Pick quality

After reading Matthias Ott’s brilliant piece on what the work is, my mind returned to something I’ve felt for a while: the forces that pull towards speed contradict those pushing for quality.

In other words: if LLMs are used to increase speed, where's the incentive to thoroughly review their output for quality, especially given the added complication of cognitive debt?

From Matthias:

> The underlying assumption: AI does in seconds what expensive humans take hours to do. Finally!

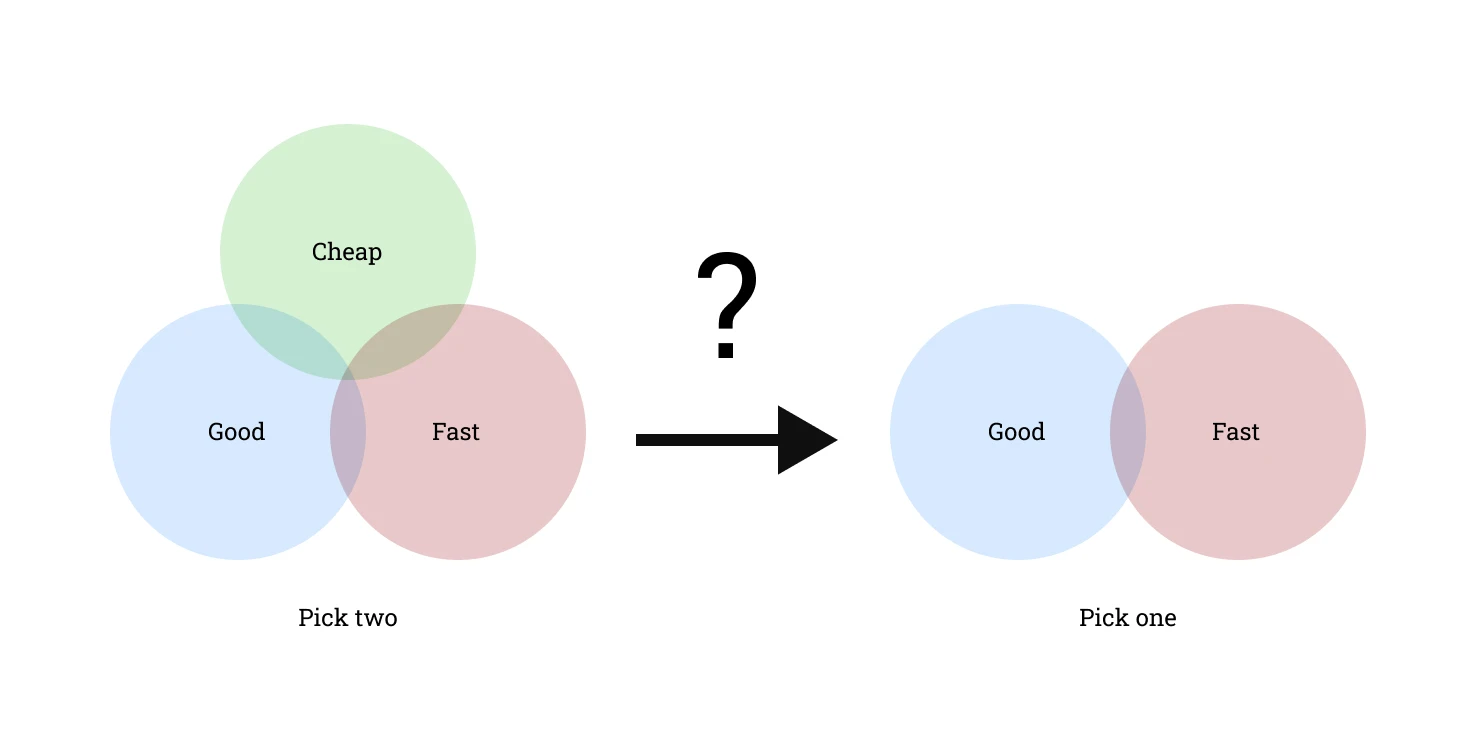

I recently quipped to a friend that we might need to redraw the “good, fast or cheap” triangle to “fast or good: pick one”:

But maybe we don’t.

If LLM use is optimising for cheap, then we're left choosing between fast and good. If it's optimising for fast, we're left choosing between good and cheap.

Either way, LLM use is an attempt to optimise for at least one of these factors. There’s no need to change the diagram.

Of course, LLM proponents are trying to convince us that we no longer need to pick between cheap, fast and good: we can have all three! But I don’t think that’s true, especially on the quality front.

When I see posts hyping the quality of an LLM’s output (“it’s better than anything a human would have coded!”), I’m left with the question: where are the skeletons buried?

What’s the accessibility like? Or security? How many of the other concerns that make you a boring developer have been skipped in the name of speed?
